Bauchwitz, B.¹, Jastrzembski, T.², Millwater, T.², Gunzelmann, G.², Niehaus, J.¹, and Weyhrauch, P.¹

Presented at the Military Health System Research Symposium (MHSRS), Kissimmee, FL (August 2018)

Background: Trauma care is crucial to the DoD, but current training may be suboptimal. Military medical providers typically receive classroom instruction in courses such as the Trauma Nurse Core Course and may have the opportunity to practice key trauma skills in high-fidelity simulations. However, both forms of training are costly and resource-intensive, so neither occurs as frequently as needed to properly maintain skills. Additionally, there is evidence that decision-making skills like the ones used in trauma management go through a period of proceduralization in which procedural representations of declarative knowledge are gradually formed through practice. A direct transition from classroom instruction to high-fidelity simulation may not optimally proceduralize skills because practice opportunities are too limited and because high-fidelity simulation may be too complex an environment for novice learners. A less resource-intensive, medium-fidelity synthetic task environment may give certain learners a better opportunity to practice and proceduralize their skills sufficiently.

A larger aim of our research program is to assess the effectiveness of different levels of simulator fidelity in training military medical personnel on trauma assessment skills. The planned study compares the effectiveness of two styles of simulation for training Air Force nurses in initial trauma assessment skills. Participants in this study complete equivalent training tasks either on a manikin or on a lower-fidelity screen-based simulator. Here we describe the process for modifying an existing screen-based simulator and generating content to represent the tasks in the lower-fidelity arm of this study.

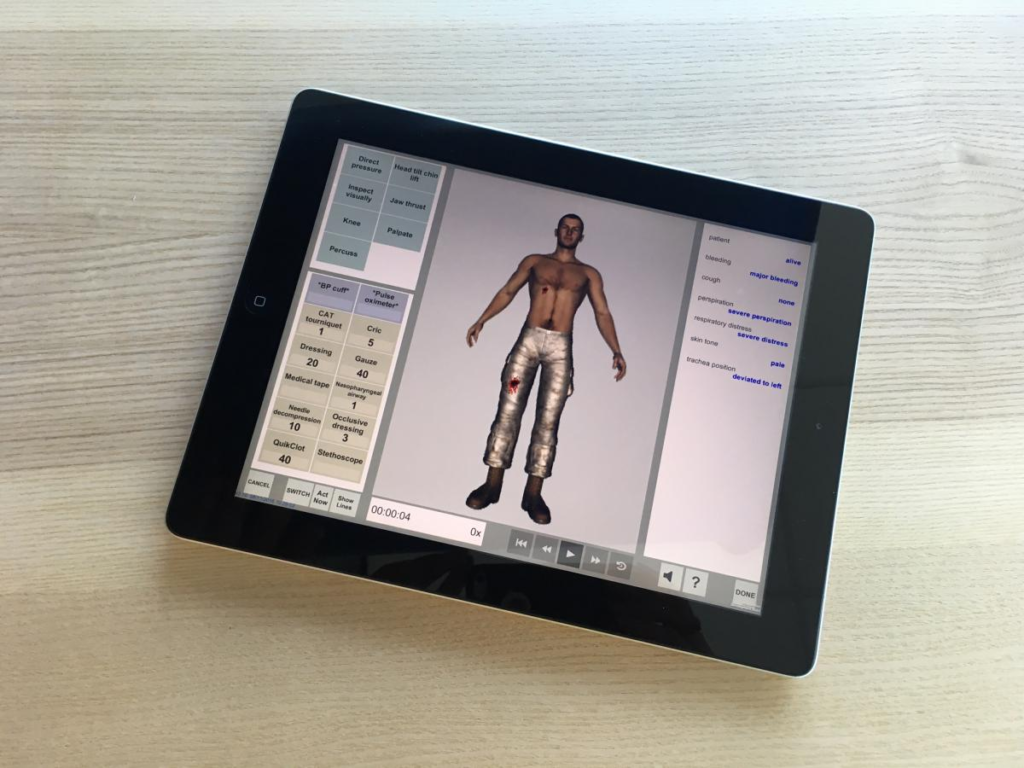

Methods: Our group previously developed the System for Trauma Assessment Training (STAT) screen-based simulator for training initial assessment skills in simple point-of-injury care scenarios. STAT represents virtual patients with state-based representations of injuries and simplified physiology models. It also includes a toolkit of tools and actions that trainees can use to collect diagnostics or perform interventions on the patient. When diagnostics are used, a status bar displays the value of relevant vital signs and physiological properties in text. STAT tracks performance metrics including which actions were taken as well as the ordering and timing of those actions. All elements of STAT simulations are data driven, meaning the tools, actions, injury patterns, physiology, and scoring mechanisms can be defined in text files that are loaded into the simulation.
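To illustrate what "data driven" can mean in practice, the following is a minimal sketch in Python. STAT's actual file format and internals are not described in this abstract, so the JSON layout, the field names (`reveals`, `treats`), the function names, and the vital sign values are all assumptions made for illustration.

```python
import json

# Hypothetical scenario definition. STAT's real file format is not specified
# in this abstract; every field name and value here is illustrative only.
SCENARIO_TEXT = """
{
  "patient": {"age": 30, "sex": "male"},
  "injuries": [{"name": "tension pneumothorax", "state": "untreated"}],
  "physiology": {"heart_rate": 128, "respiratory_rate": 32},
  "actions": [
    {"id": "pulse_oximeter", "kind": "diagnostic", "reveals": ["heart_rate"]},
    {"id": "needle_decompression", "kind": "intervention",
     "treats": "tension pneumothorax"}
  ]
}
"""


def load_scenario(text):
    """Parse a scenario definition and index its actions by id."""
    data = json.loads(text)
    data["actions_by_id"] = {a["id"]: a for a in data["actions"]}
    return data


def perform(scenario, action_id, elapsed_s, log):
    """Apply one action, log it with its time, and return any revealed values."""
    action = scenario["actions_by_id"][action_id]
    log.append((elapsed_s, action_id))  # ordering and timing are kept for scoring
    if action["kind"] == "diagnostic":
        return {k: scenario["physiology"][k] for k in action["reveals"]}
    # Interventions move the matching injury to a new state.
    for injury in scenario["injuries"]:
        if injury["name"] == action.get("treats"):
            injury["state"] = "treated"
    return {}


scenario = load_scenario(SCENARIO_TEXT)
log = []
readout = perform(scenario, "pulse_oximeter", 12.0, log)
perform(scenario, "needle_decompression", 40.5, log)
```

Because the scenario lives entirely in the text definition, adding a tool or injury in a design like this means editing data rather than simulator code.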

The initial version of STAT was intended for rapid point-of-injury scenarios with a small number of injuries and available tools. It included limited segmentation of the patient’s body (roughly 4-10 segments, depending on the scenario) and a small number of tools and actions that could be performed (roughly 20 total, depending on the scenario). The simulator-fidelity study defined five more complex scenarios requiring a full trauma assessment and the delivery of interventions beyond those available at point-of-injury. As a result, we had to adapt STAT to support more tools, more injuries, and a greater degree of body segmentation.

To create these scenarios, we first defined all of the tools and actions that could be used in the manikin task and represented them in STAT. We identified 78 distinct actions that the participant could take to interact with the patient. This large expansion in the number of represented actions required us to significantly revise our user interface. We segmented the toolkit into portions devoted to diagnostics, interventions, and notes. We additionally subdivided diagnostics into tools, actions, and lab tests and subdivided interventions into tools, actions, and fluids. We further separated the user interface into individual tabs corresponding to the stages of the initial assessment (A-I), where each tab showed only the tools and actions relevant to its stage. This representation was analogous to the layout of the cart used in the physical task, which organized tools by their role in particular stages of the initial assessment.
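The toolkit organization described above can be sketched as a simple filter over tagged entries. This is a hypothetical illustration rather than STAT's implementation: the entry names, the stage assignments, and the `tab_contents` function are assumptions, and only a handful of the 78 actions are represented.

```python
from collections import defaultdict

# Hypothetical toolkit entries: (action id, section, subsection, relevant stages).
# The stage letters follow the A-I tabs; the assignments are illustrative only.
TOOLKIT = [
    ("stethoscope", "diagnostics", "tools", {"B", "C"}),
    ("check_pupils", "diagnostics", "actions", {"D"}),
    ("blood_gas", "diagnostics", "lab tests", {"B"}),
    ("chest_tube", "interventions", "tools", {"B"}),
    ("saline_bolus", "interventions", "fluids", {"C"}),
]


def tab_contents(stage, toolkit=TOOLKIT):
    """Return {section: {subsection: [action ids]}} for one assessment-stage tab."""
    layout = defaultdict(lambda: defaultdict(list))
    for action_id, section, subsection, stages in toolkit:
        if stage in stages:  # entries not relevant to this stage are hidden
            layout[section][subsection].append(action_id)
    return {sec: dict(subs) for sec, subs in layout.items()}


breathing_tab = tab_contents("B")
```

Tagging each entry with its stages, rather than keeping a separate list per tab, lets one tool appear in several tabs without being defined twice.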

In addition to refining the representation of the available tools and actions, we found that it was inadequate to represent vital signs and diagnostics purely in text in a sidebar. First, such a representation required directing attention away from the patient, which was contrary to established requirements for training trauma assessment skills. Second, representing certain vital signs such as the respiratory rate or degree of chest rise in text eliminated any interpretation by the participant, a critical skill in trauma assessment. To address this, we placed vital sign status in a centrally located hovering text box that appeared whenever the participant used a diagnostic tool. Only signs that the relevant diagnostic equipment would itself display as text were shown in this way. To support physiological properties requiring visual interpretation, we animated certain portions of the patient’s body such as the chest and eyelids. We did not animate portions of the patient that were not also animated in the manikin model, as we wanted to keep the screen-based task strictly lower fidelity than the manikin task. Additionally, physiological properties requiring auditory interpretation, such as heart and lung sounds, were represented as sound files that played when an appropriate diagnostic was performed.

Beyond these user interface changes, we revised the scoring mechanisms to accommodate the more complex scoring required for assessment of the study data. While the original point-of-injury scenarios assigned scores to simple atomic actions, the study tasks required scoring of more complex sequences of actions and of actions repeated at specific stages of the survey (for instance, assigning points to the participant for applying a C-spine collar in one particular context but not another). We also had to support earning points for performing equivalent alternative actions without duplicating the score when both actions were performed. To do this, we added a number of hidden functions to the physiology of each patient, which took unique values depending on the specific action sequences that were used.
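The two scoring requirements above, context-dependent credit and single credit for equivalent alternatives, can be sketched with one hidden flag per rule. This is a minimal illustration under stated assumptions, not the scoring code used in STAT: the rule format, action names, stage letters, and point values are hypothetical, and the pairing of actions as "equivalent" is for illustration, not clinical guidance.

```python
# Hypothetical rule set; action names, stages, and point values are illustrative.
RULES = [
    # Points only when the collar is applied during one particular stage.
    {"name": "c_spine_in_airway", "points": 2,
     "any_of": ["apply_c_spine_collar"], "stage": "A"},
    # Either alternative earns the points, but only once.
    {"name": "open_airway", "points": 3,
     "any_of": ["jaw_thrust", "insert_oral_airway"], "stage": None},
]


def score(action_log, rules=RULES):
    """Score a log of (stage, action) pairs; each rule is credited at most once."""
    hidden = {rule["name"]: 0 for rule in rules}  # one hidden property per rule
    for stage, action in action_log:
        for rule in rules:
            stage_ok = rule["stage"] is None or rule["stage"] == stage
            if stage_ok and action in rule["any_of"]:
                # Setting a flag rather than adding points avoids double credit.
                hidden[rule["name"]] = 1
    total = sum(rule["points"] * hidden[rule["name"]] for rule in rules)
    return total, hidden


log = [
    ("A", "jaw_thrust"),
    ("A", "insert_oral_airway"),    # equivalent alternative: no extra points
    ("D", "apply_c_spine_collar"),  # wrong context for this rule: no points
]
total, hidden = score(log)
```

In this sketch the participant earns the airway points once despite performing both alternatives, and earns nothing for the collar because it was applied outside the scored stage.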

Results: We developed five new scenarios for a screen-based simulator for training initial trauma assessment in Air Force personnel. These scenarios included: (1) a 30-year-old male blast victim with tension pneumothorax, open fracture to the right lower extremity, and facial lacerations; (2) a 23-year-old male IED victim ejected from a Humvee with tension pneumothorax, depressed skull fracture, facial and chest burns, and a below-knee right leg amputation; (3) a 23-year-old female burn victim with facial burns, a tension pneumothorax, and gunshot wounds to an upper extremity; (4) a 27-year-old male fall victim with impaled shrapnel, fractured wrist, and pneumothorax; and (5) an unconscious 32-year-old female blast victim ejected from a Humvee with facial burns, depressed skull fracture, open fracture to the left humerus, tension pneumothorax, and internal abdominal hemorrhage.

Conclusion: We successfully modified an existing screen-based point-of-injury trauma training simulator to represent more complex Air Force nursing tasks at higher echelons of care. These modifications increase the flexibility and functionality of our original STAT simulator and enable its use in a broader training domain. Future work will use these scenarios to study the effectiveness of different levels of simulator fidelity in training trauma assessment skills.

¹ Charles River Analytics

² Air Force Research Laboratory

This work was supported by the Air Force Research Laboratory. The views, opinions, and/or findings contained in this report are those of the authors and should not be construed as an official Department of the Air Force position, policy, or decision unless so designated by other documentation.
